Engineering the Capstone

I designed a sprint-based engineering curriculum, have taught the capstone with it for 12 semesters since 2019, and have iterated on it against measured outcomes.

The System

Sprint-Based Curriculum

Agile sprints with product backlog items, stand-ups, and retrospectives. Students experience the same workflow they'll use in industry.

Real Team Roles

Scrum Master, Front-end Dev, Back-end Dev, AI Dev, Documentation Specialist. Teams refine these roles over the semester to fit their project, and each student owns what they take on.

Engineering Discipline

Branching strategies, PR-based code reviews, deployment pipelines. No shortcuts — teams learn by doing it right.

Outcome Measurement

Peer reviews, quality rubrics, and end-of-semester surveys. Success is measured by what ships and what students learn — and each cohort shapes the next.

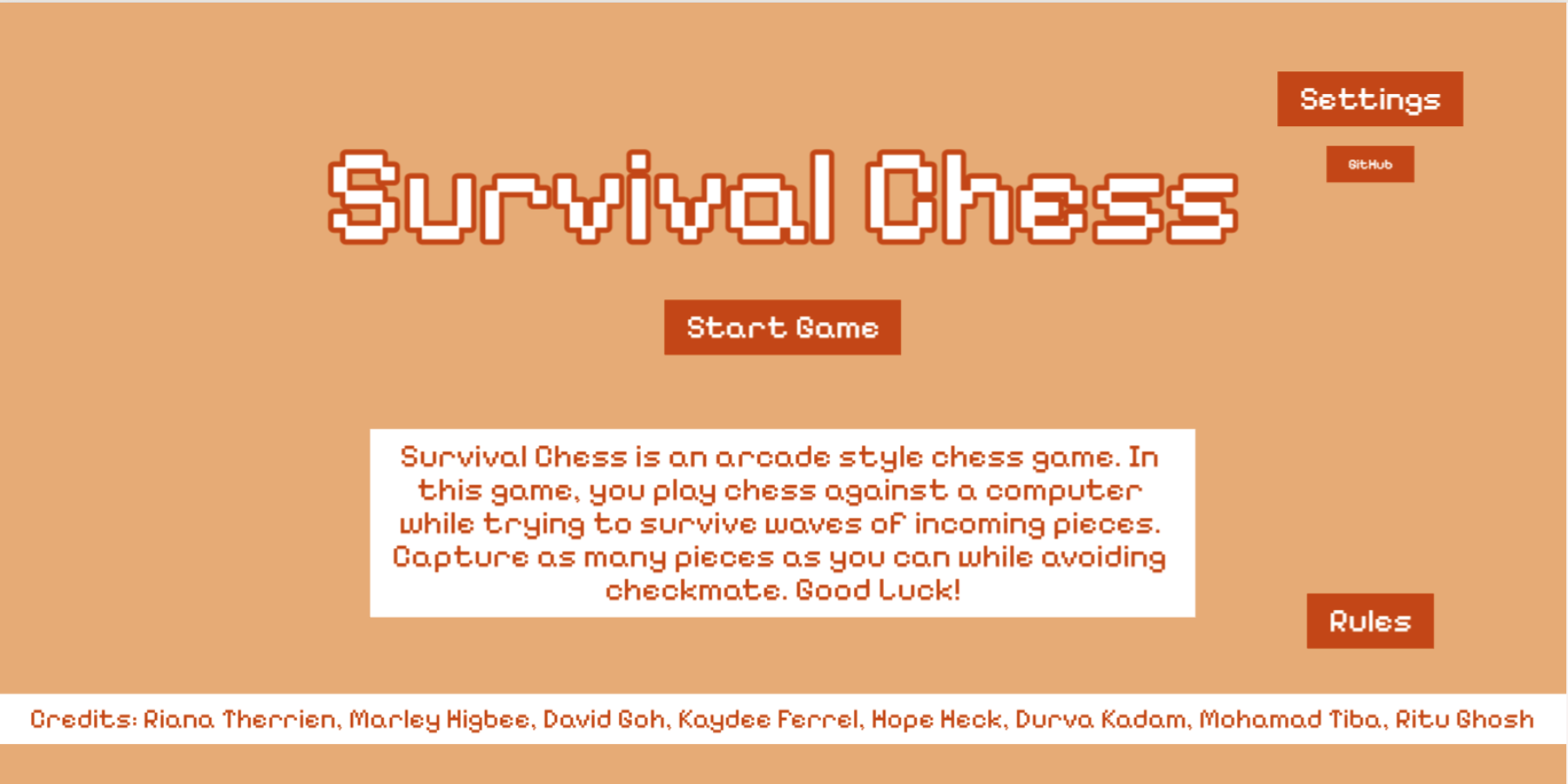

Recent Shipped Products

Four recent examples from 2025 — full-stack applications and games shipped in one semester by student teams I guided through engineering, process, communication, and final demo.

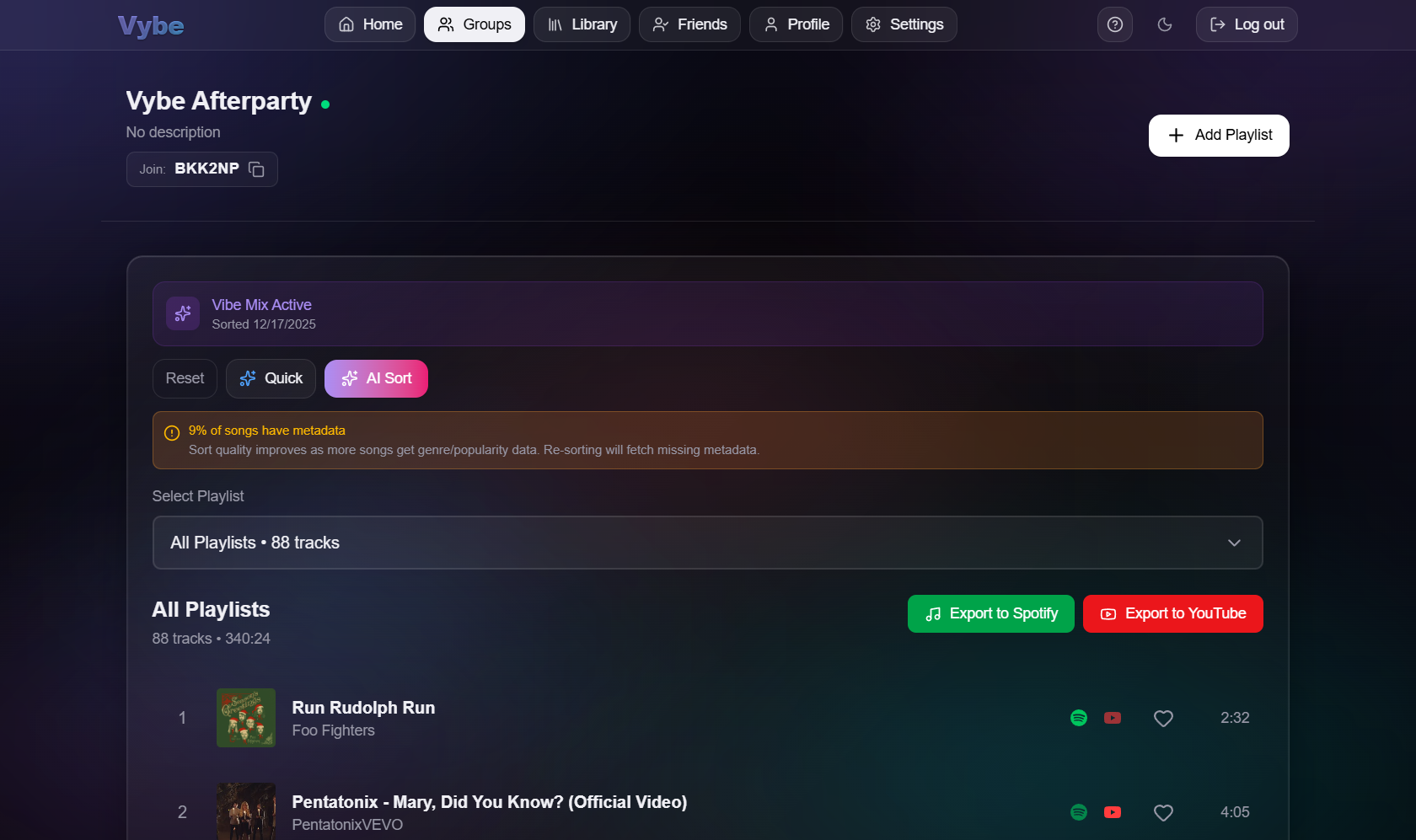

Vybe

Cross-platform music-sharing app that bridges Spotify and YouTube. Shared groups, combined playlists, and a Song of the Day feature.

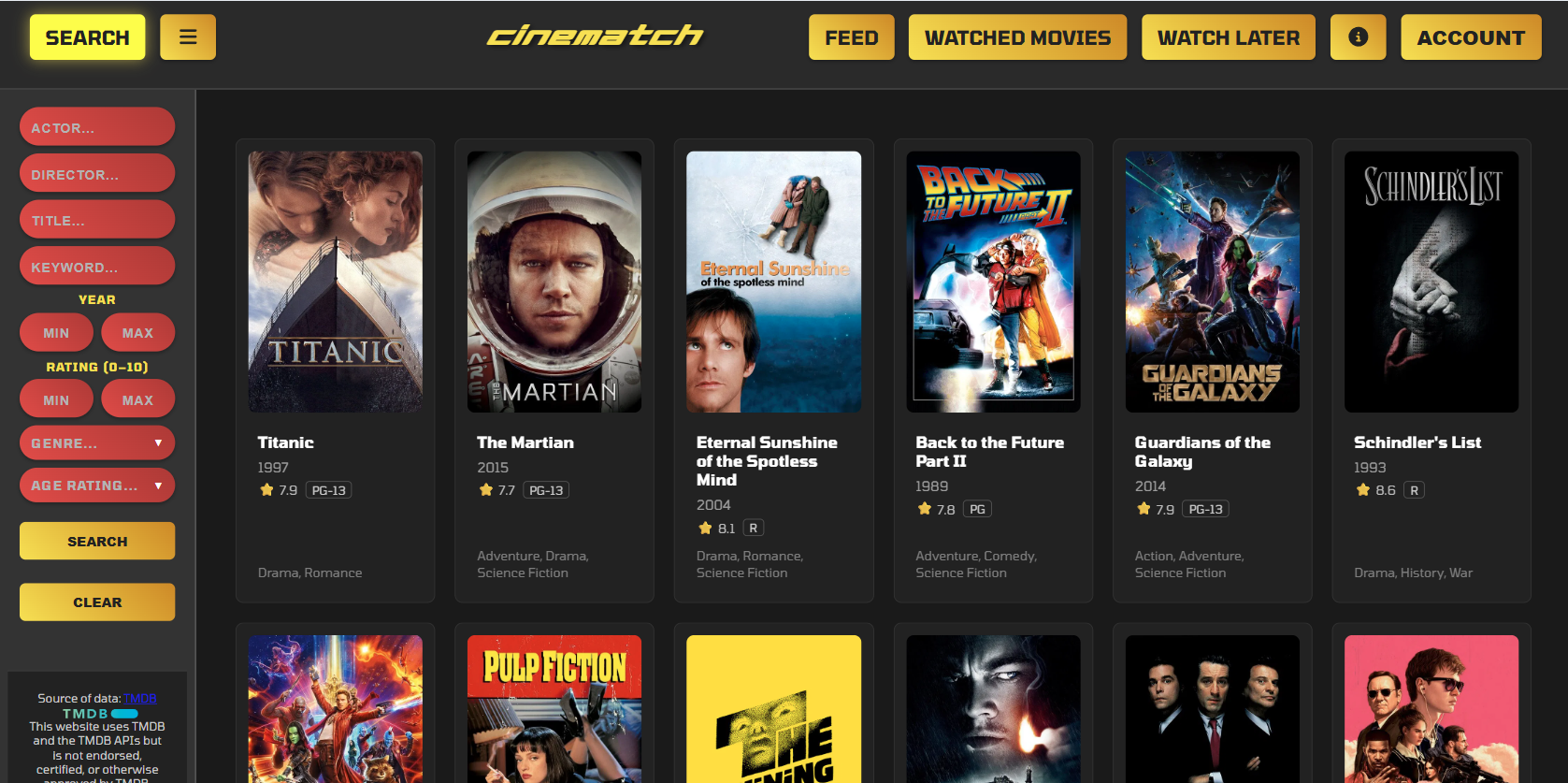

CineMatch

Movie recommendation engine that learns from user ratings. Rate movies you've seen, get personalized suggestions, save favorites.

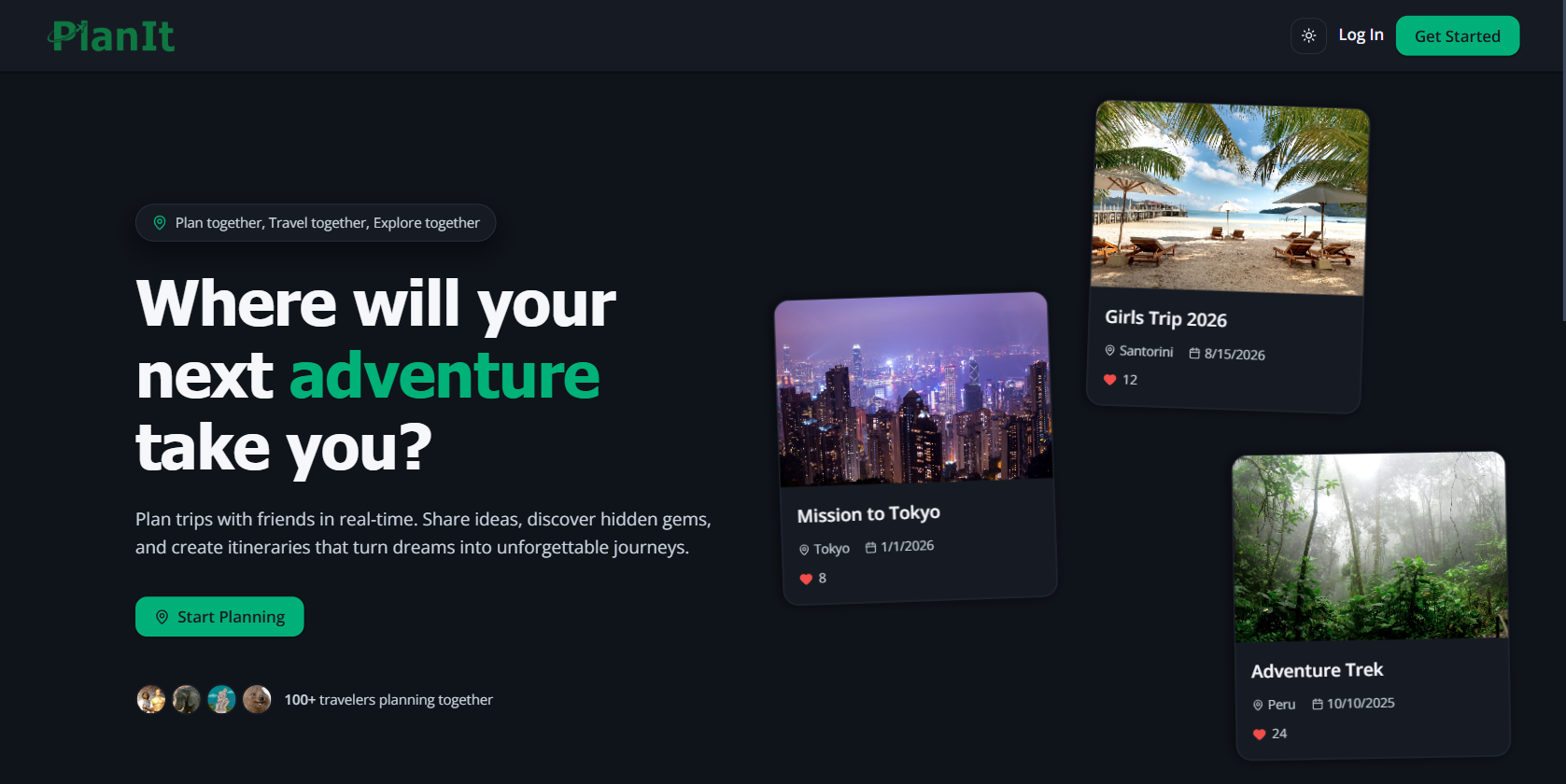

PlanIt

Trip-planning web app for organizing destinations, activities, and travel times in a single interactive itinerary.

"Students don't just learn to code — they learn to ship. Each team runs sprints, manages a backlog, does code reviews, and deploys to production infrastructure."